Here is my list of amazing applications of Generative UI. It’s like a mini blog of all the cool things I’ve seen that made me think: “holy s**t”.

Robots!

I’m not sure how much Generative AI was used to make these, but every demo video like this is incredible:

Unitree Go2 New Upgrade

The evolution of intelligent robots is full of surprises and exciting moments every day. Let's have fun together.🥳#Unitree #AI #EmbodiedIntelligence #AGI #RobotDog #Quadruped pic.twitter.com/GUrABm457s

— Unitree (@UnitreeRobotics) July 30, 2024

Text to video

The latest model is Runway’s Gen-3, but it’s been a week of competition:

Introducing Gen-3 Alpha: Runway’s new base model for video generation.

Gen-3 Alpha can create highly detailed videos with complex scene changes, a wide range of cinematic choices, and detailed art directions. https://t.co/YQNE3eqoWf

(1/10) pic.twitter.com/VjEG2ocLZ8

— Runway (@runwayml) June 17, 2024

just 5 days after Luma Labs announced Dream Machine:

Introducing Dream Machine - a next generation video model for creating high quality, realistic shots from text instructions and images using AI. It’s available to everyone today! Try for free here https://t.co/rBVWU50kTc #LumaDreamMachine pic.twitter.com/Ypmacd8E9z

— Luma (@LumaLabsAI) June 12, 2024

and we STILL don’t have access to OpenAI’s Sora model:

Introducing Sora, our text-to-video model.

Sora can create videos of up to 60 seconds featuring highly detailed scenes, complex camera motion, and multiple characters with vibrant emotions. https://t.co/7j2JN27M3W

Prompt: “Beautiful, snowy… pic.twitter.com/ruTEWn87vf

— OpenAI (@OpenAI) February 15, 2024

tldraw: sketch to running code!

The tldraw demos are amazing: they take a rough sketch and generate working HTML, with styles and JavaScript, that implements your sketch!

I'm shook @tldraw pic.twitter.com/tMm2PbngHw

— Nick St. Pierre (@nickfloats) November 16, 2023

Then with webcam support:

face reveal pic.twitter.com/onkl8Q5HSn

— tldraw (@tldraw) May 20, 2024

Then, more recently, as a dig at Apple’s AI calculator:

can your calculator do this? pic.twitter.com/F9WFNqBi0h

— tldraw (@tldraw) June 13, 2024

Whisper: speech to text

Whisper is a modern speech-to-text model from OpenAI that can even run in your browser, giving you fast, cheap transcription.

Introducing Whisper WebGPU: Blazingly-fast ML-powered speech recognition directly in your browser! 🚀 It supports multilingual transcription and translation across 100 languages! 🤯

The model runs locally, meaning no data leaves your device! 😍

Check it out (+ source code)! 👇 pic.twitter.com/hKSoWuC8D2

— Xenova (@xenovacom) June 9, 2024

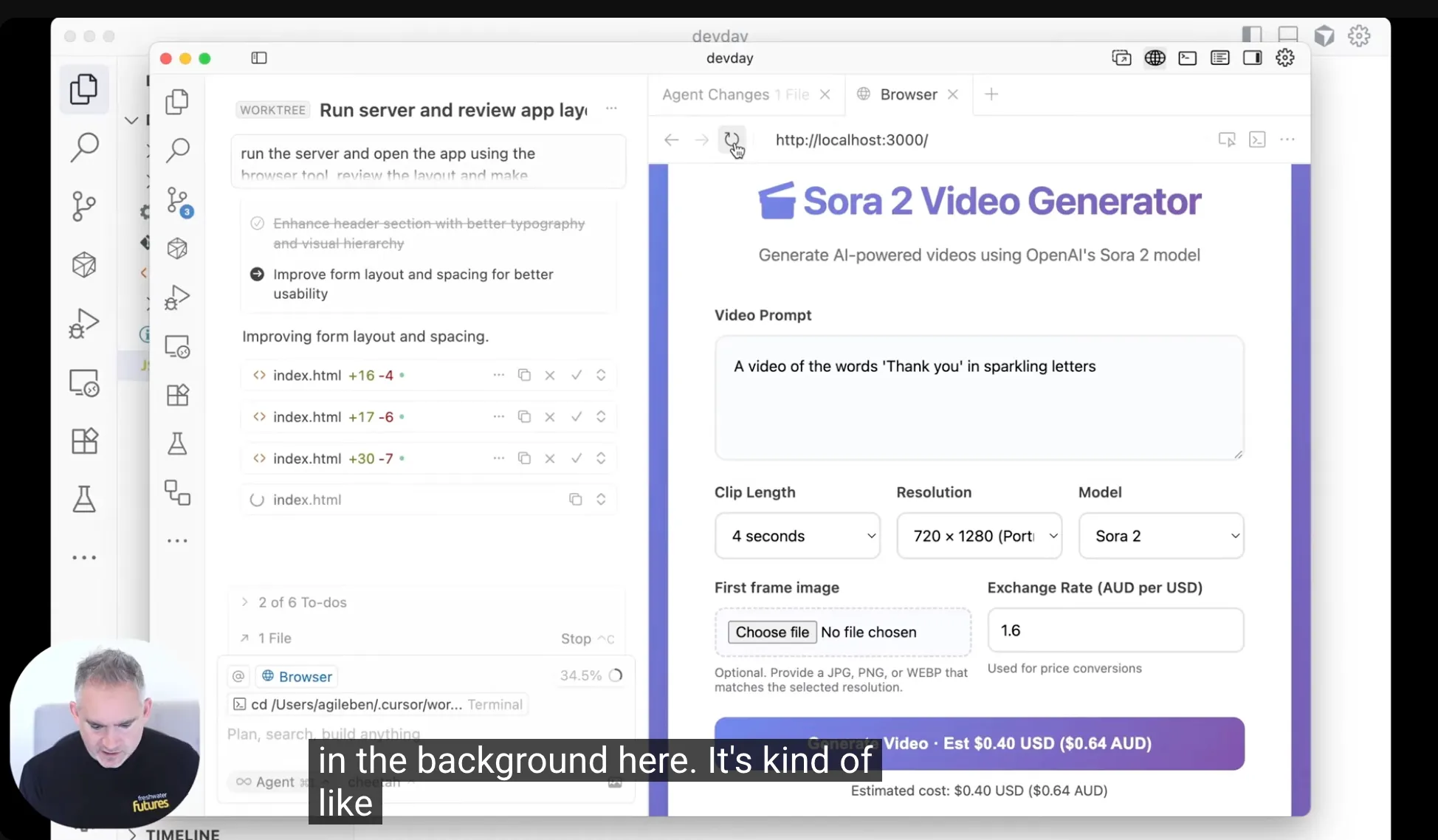

Text to code

From GitHub Copilot to the futuristic IDE Cursor.so, we have had text-to-code for a while, but it’s STILL amazing to go from a prompt to running code. Here is Cursor’s launch tweet; since then they have added many more amazing features. A must-try for any software engineer.

Introducing Cursor!! (https://t.co/wT5wRe22O2)

A brand new IDE build from the ground up with LLMs. Watch us use Cursor to ship new features blazingly fast. pic.twitter.com/9TqJRq67oJ

— Aman Sanger (@amanrsanger) January 18, 2023

Text to application

An agent-style tool that takes a prompt and turns it into an entire application: design, code, and a GitHub pull request!

Vision to pose detection

Modern vision models can do real-time gesture recognition. In the first demo, your webcam captures your hand position and you can use it to draw in the air!

Been playing around with a simple idea I had. It's still very early (as you can tell from the favicon and web-app name LOL). All you have to do is pinch to draw!

Feel free to try it out here: https://t.co/8LJpNSoZBR pic.twitter.com/mqQIOMQdkV

— Johnathan Chiu (@johnathanchewy) May 21, 2024
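The pinch-to-draw idea boils down to a distance check between two fingertips. Here’s a minimal sketch, assuming a hand-tracking model (MediaPipe Hands is one example) already gives you normalized (x, y) coordinates for the thumb tip and index fingertip every frame. The threshold, `AirCanvas`, and the other names are hypothetical, not taken from the demo’s source.

```python
import math
from dataclasses import dataclass, field

Point = tuple[float, float]  # normalized (x, y) image coordinates, 0..1

# Hypothetical threshold: fingertips closer than this count as a pinch.
PINCH_THRESHOLD = 0.05


def is_pinching(thumb_tip: Point, index_tip: Point,
                threshold: float = PINCH_THRESHOLD) -> bool:
    """True when the thumb tip and index fingertip are close together."""
    return math.dist(thumb_tip, index_tip) < threshold


@dataclass
class AirCanvas:
    """Turns per-frame fingertip positions into strokes (lists of points)."""
    strokes: list[list[Point]] = field(default_factory=list)
    drawing: bool = False  # is the "pen" currently down?

    def update(self, thumb_tip: Point, index_tip: Point) -> None:
        if is_pinching(thumb_tip, index_tip):
            if not self.drawing:
                self.strokes.append([])  # pinch just started: begin a new stroke
                self.drawing = True
            # Draw at the midpoint between the two fingertips.
            midpoint = ((thumb_tip[0] + index_tip[0]) / 2,
                        (thumb_tip[1] + index_tip[1]) / 2)
            self.strokes[-1].append(midpoint)
        else:
            self.drawing = False  # fingers apart: pen up


if __name__ == "__main__":
    canvas = AirCanvas()
    canvas.update((0.50, 0.50), (0.52, 0.51))  # pinch: starts stroke 1
    canvas.update((0.60, 0.50), (0.62, 0.50))  # still pinching: extends stroke 1
    canvas.update((0.40, 0.40), (0.60, 0.60))  # fingers apart: pen up
    canvas.update((0.30, 0.30), (0.31, 0.30))  # pinch again: starts stroke 2
    print(len(canvas.strokes))  # 2
```

The hard part, finding the fingertips in a webcam frame, is handled by the vision model. What’s left is a small state machine: a pinch starting means pen down, the pinch ending means pen up.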

and more:

AI advances give old hardware new abilities. https://t.co/tJn6TSEptM

— Louis Anslow (@LouisAnslow) June 19, 2024

Vision (screen) to context

At the GPT-4o launch, OpenAI promised a sidekick that can see your screen and answer questions using that context. As of 18th June we’re still waiting for it to become available, but others have already launched the same idea across several models:

So we've been cooking the last few weeks. Excited to finally unveil Invisibility: the dedicated MacOS Copilot. Powered by GPT4o, Gemini 1.5 Pro and Claude-3 Opus, now available for free -> @invisibilityinc

Added a new video sidekick, enabling seemless context absorbtion.… pic.twitter.com/JPumIZfvq1

— Sulaiman Khan Ghori (@sulaimanghori) May 16, 2024

Here is OpenAI’s “coming soon” version:

Live demo of coding assistance and desktop app pic.twitter.com/GlSPDLJYsZ

— OpenAI (@OpenAI) May 13, 2024

Stanford on the GenAI Productivity Boost

Compared to a group of workers operating without the tool, those who had help from the chatbot were 14% more productive. Notably, the effect was largest for the least skilled and least experienced workers, who saw productivity gains of up to 35%.

What happens when you unleash generative #AI in a real workplace? This study by Professor @erikbryn aimed to find out. #businessandsociety #tech https://t.co/EkDBK5aEQu

— Stanford Graduate School of Business (@StanfordGSB) May 26, 2024

The rapid and ongoing improvement of AI

A former OpenAI researcher wrote an analysis of the “stacking” improvements in AI and projected forward, arguing that we could see remarkable “AGI”-like capabilities as soon as 2027!

AGI by 2027 is strikingly plausible.

That doesn’t require believing in sci-fi; it just requires believing in straight lines on a graph. pic.twitter.com/dYaqUc1tTM

— Leopold Aschenbrenner (@leopoldasch) June 4, 2024

Speed increases

Meta’s incredible new Llama 3.1 models, with close-to-GPT-4 power, have open weights, so you can run them anywhere. They are now on Groq, an LLM accelerator provider that runs LLMs really fast at low cost. The speed and cost combo unlocks some amazing use cases!

What can you do with Llama quality and Groq speed? You can do Instant. That's what. Try Llama 3.1 8B for instant intelligence on https://t.co/1tnDYnUkUi. pic.twitter.com/ZI4kdGXOGC

— Jonathan Ross (@JonathanRoss321) July 23, 2024

Here is one use case, generating a quiz on the fly:

🔥 Super Fast Adaptive Quiz powered by @Groq's Llama 3.1 8B, @Streamlit and Educhain (https://t.co/GYR42QDrdO)!

🤯 The quiz gets harder when you're right (and easier when you're wrong)!

Try out the app: https://t.co/CTlBgAqsDX pic.twitter.com/gmZq0hnbsT

— Satvik Paramkusham (@satvikps) August 12, 2024
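The “harder when you’re right, easier when you’re wrong” behaviour is a simple feedback rule. Here’s a minimal sketch, assuming a 1 to 5 difficulty scale and hypothetical `next_difficulty` / `run_quiz` helpers. Educhain’s actual logic may differ, and the LLM call that writes each question at the chosen level is only indicated by a comment.

```python
MIN_LEVEL, MAX_LEVEL = 1, 5  # hypothetical difficulty scale


def next_difficulty(current: int, answered_correctly: bool) -> int:
    """Step up after a correct answer, down after a wrong one, clamped to the scale."""
    step = 1 if answered_correctly else -1
    return max(MIN_LEVEL, min(MAX_LEVEL, current + step))


def run_quiz(answers: list[bool], start: int = 2) -> list[int]:
    """Return the difficulty used for each question, given whether each was answered correctly."""
    levels = []
    level = start
    for correct in answers:
        levels.append(level)
        # In a real app, this is where you'd ask the LLM for a question
        # at `level`, e.g. "Write a multiple-choice question at difficulty 3/5".
        level = next_difficulty(level, correct)
    return levels


if __name__ == "__main__":
    print(run_quiz([True, True, True, True, False]))  # [2, 3, 4, 5, 5]
```

Each question can only be generated after the previous answer is known, so the model’s response time is time the user spends waiting. That’s why a fast, cheap provider makes this kind of app feel instant.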

Interpreting how LLMs “Think”

Anthropic has some really impressive demos of tools they have built to understand and even modify how an LLM thinks:

The new interpretability paper from Anthropic is totally based. Feels like analyzing an alien life form.

If you only read one 90-min-read paper today, it has to be this one https://t.co/9yecogVqJf pic.twitter.com/AQmR0at95J

— Thomas Wolf (@Thom_Wolf) May 21, 2024

And the famous demo where they made Claude think it was literally the Golden Gate Bridge!

If you use the same prompt, but force the Golden Gate Bridge feature to be maximally active, Claude starts believing that it is the bridge itself!

We repeat this experiment in domains ranging from code to sycophancy, and find similar results. pic.twitter.com/BZmYdUiI7O

— Emmanuel Ameisen (@mlpowered) May 21, 2024
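Roughly, Anthropic’s “features” come from a sparse autoencoder trained on the model’s internal activations. Each activation is approximated as a weighted sum of feature directions, and “forcing a feature to be maximally active” means overriding one feature’s weight before the activation flows on through the model. Below is a toy sketch of just that arithmetic, under heavy assumptions. The 3-dimensional vectors, the feature names, and the clamp value are all made up. The encoder here is a plain ReLU projection onto orthonormal directions, whereas the real one is learned and works with far larger vectors and millions of features.

```python
Vector = list[float]

# Hypothetical feature directions (the real ones are learned, not hand-picked).
FEATURES: dict[str, Vector] = {
    "golden_gate_bridge": [1.0, 0.0, 0.0],
    "code": [0.0, 1.0, 0.0],
}


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def add_scaled(a: Vector, b: Vector, s: float) -> Vector:
    """Return a + s * b."""
    return [x + s * y for x, y in zip(a, b)]


def encode(activation: Vector) -> dict[str, float]:
    """Toy encoder: how strongly each feature is active (ReLU of a projection)."""
    return {name: max(0.0, dot(activation, d)) for name, d in FEATURES.items()}


def clamp_feature(activation: Vector, name: str, value: float) -> Vector:
    """Force one feature to `value`, leaving other features, and any part of
    the activation the features don't explain, unchanged."""
    current = encode(activation)[name]
    return add_scaled(activation, FEATURES[name], value - current)


if __name__ == "__main__":
    activation = [0.1, 0.8, 0.3]  # third component: not explained by any feature
    steered = clamp_feature(activation, "golden_gate_bridge", 10.0)
    print(encode(activation))
    print(encode(steered))  # the bridge feature is now ~10, "code" is untouched
```

The design choice worth noticing is that only the clamped feature’s contribution changes. Everything else in the activation passes through as before, which is why the model keeps functioning while becoming obsessed with the bridge.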